Two weeks ago, we published a paper in the Philosophical Transactions of the Royal Society. The paper presents an economic model for inertial fusion and demonstrates a new design point for a power plant. Objectively, the most important conclusion is probably that fusion can generate power for the same price as renewables, which are currently our cheapest source of energy. Fusion will never be “too cheap to meter”; that was always a pipe dream. But it can be the cheapest source of baseload power.

Objectively, this probably is the most important message, but it’s what our new design point means for the engineering and physics challenges that I’m most excited about. It is natural to expect a direction with lower cost to be more difficult but, in this case, it is both lower cost and lower risk, for reasons I will try to explain.

All inertial fusion, in the most general sense, works in the same way and involves a big machine called a “driver”. At First Light this is a pulsed power machine that electromagnetically launches a projectile, like a railgun. At the National Ignition Facility, the driver is a laser. At Sandia National Laboratories, the driver is a different type of pulsed power machine.

The driver puts a huge amount of energy into a small “target” in a very short space of time. In First Light terms, the projectile hits the target. The target focusses the energy into fusion fuel, which burns very quickly and releases a pulse of energy.

It is less than one millionth of a second from the projectile hitting the target to the fusion event, and the pulse of energy released is shorter than a billionth of a second. The way you make continuous power with inertial fusion is to repeat the process again and again. Inertial fusion is a pulsed process; it’s like an internal combustion engine: inject fuel, spark, burn, reset.

When designing a power plant, this means we have an extra free parameter. The same amount of power can be generated with a big energy release per shot and a low frequency, or a smaller energy release and a higher frequency; power is energy per shot times frequency.

P = Ef
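To make the trade-off concrete, here is a minimal sketch that rearranges P = Ef to find the shot frequency needed for a fixed power at different energies per shot. The 1 GW target and the energies per shot are illustrative numbers chosen for round arithmetic, not values from the paper.

```python
def shot_frequency_hz(power_w: float, energy_per_shot_j: float) -> float:
    """Shot frequency needed to deliver a given average power, from P = E * f."""
    return power_w / energy_per_shot_j


# Illustrative assumption: 1 GW of average fusion power (not a figure from the paper).
target_power_w = 1.0e9

# Hypothetical energies per shot, in joules.
for energy_j in (0.2e9, 1.0e9, 5.0e9):
    f = shot_frequency_hz(target_power_w, energy_j)
    print(f"{energy_j / 1e9:.1f} GJ per shot -> {f:.1f} Hz")
```

Every row delivers the same power; only the split between energy per shot and frequency changes.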

Given that we are free to pick, the crucial question is: which is the cheapest? A larger energy release might require a bigger driver, which will cost more, but the lower frequency means fewer targets are used, so they will cost less. What the paper does is build the simplest possible fully formed levelised cost model, accounting for all the main costs.

The levelised cost is the most common way to compare different energy technologies. Specifically, it is the price for which the energy would need to be sold to make the net present value of the investment zero. That is, on average you must sell the energy for more than this price to make a profit. The normal unit of levelised cost is dollars per megawatt-hour ($/MWh). To give some indicative numbers, solar and onshore wind are $35 / MWh, offshore wind $50 / MWh, gas $80 / MWh, and nuclear $100 / MWh.
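The definition above can be sketched in a few lines. This is the generic textbook calculation (discounted lifetime cost divided by discounted lifetime energy), not the model from the paper, and the plant numbers below are made up purely for illustration.

```python
def levelised_cost(costs, energy_mwh, discount_rate):
    """Price per MWh at which the net present value of the project is zero.

    costs[t] is the spend in dollars and energy_mwh[t] the output in year t.
    """
    discounted_cost = sum(c / (1 + discount_rate) ** t for t, c in enumerate(costs))
    discounted_energy = sum(e / (1 + discount_rate) ** t for t, e in enumerate(energy_mwh))
    return discounted_cost / discounted_energy


def net_present_value(price, costs, energy_mwh, discount_rate):
    """Discounted sum of (revenue - cost) over the life of the project."""
    return sum(
        (price * e - c) / (1 + discount_rate) ** t
        for t, (c, e) in enumerate(zip(costs, energy_mwh))
    )


# Hypothetical plant: $500M built in year 0, then 30 years of operation
# at $20M per year, producing 1,000,000 MWh per year. Discount rate 7%.
costs = [500e6] + [20e6] * 30
energy_mwh = [0.0] + [1e6] * 30
price = levelised_cost(costs, energy_mwh, 0.07)

print(f"levelised cost: ${price:.2f} / MWh")
print(f"NPV at that price: ${net_present_value(price, costs, energy_mwh, 0.07):.2f}")
```

Selling at exactly the levelised cost gives a net present value of zero (up to rounding), which is the defining property.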

The basic trade-off given above doesn’t seem too difficult, but it is more complex than just that. An example of a more complex issue is the lifetime of the driver, which is one of the difficult engineering challenges. Our current driver, Machine Three, uses spark gap switches with a lifetime of perhaps 1000 shots, nowhere near long enough for a power plant.

Even with a longer lifetime, one of the major costs in the model is the replacement of driver components as they wear out. This means that if the frequency is slower, the driver lasts proportionally longer, and this affects the final cost.
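The proportionality is simple arithmetic: a component rated for a fixed number of shots lasts for that number of shots divided by the frequency. As a rough sketch, here is the 1000-shot spark gap lifetime mentioned above at a few frequencies. The frequencies are illustrative, and the function name is hypothetical.

```python
SECONDS_PER_HOUR = 3600.0


def component_lifetime_hours(shot_life: float, frequency_hz: float) -> float:
    """Running time before a component rated for `shot_life` shots wears out."""
    return shot_life / frequency_hz / SECONDS_PER_HOUR


# 1000 shots is the approximate spark gap lifetime quoted for Machine Three.
for frequency_hz in (5.0, 1.0, 0.2):
    hours = component_lifetime_hours(1000, frequency_hz)
    print(f"{frequency_hz} Hz: {hours:.2f} hours")
```

Even at 0.2 Hz, 1000 shots lasts only about 1.4 hours, which shows both why a lower frequency helps and why a much longer component lifetime is still needed.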

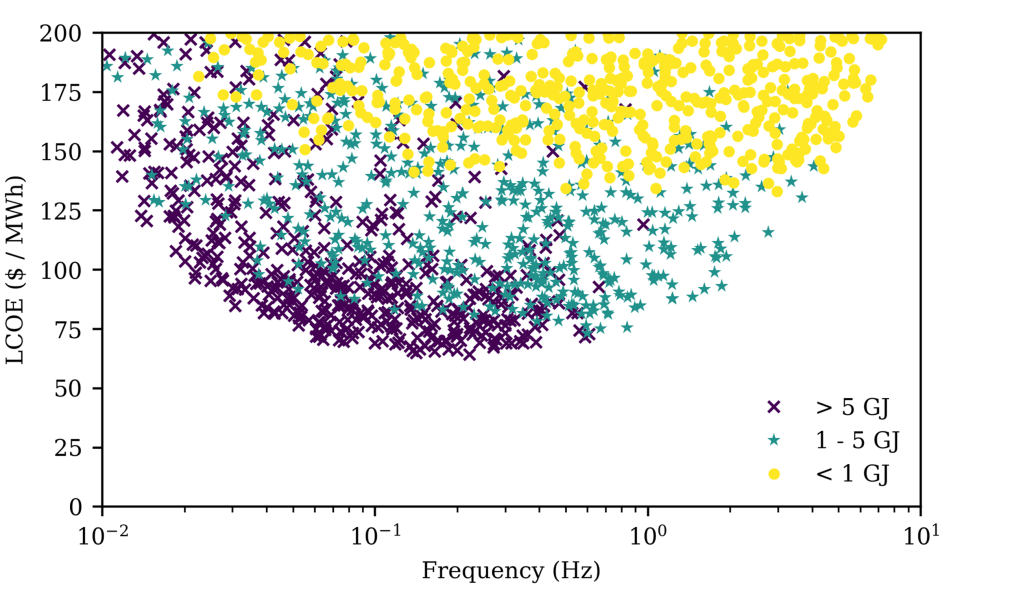

The model has fourteen parameters and the paper uses a Monte Carlo approach to explore how these parameters interact and affect the levelised cost. The key result is how the cost varies with the frequency.

The essential feature of the Monte Carlo method is randomness; in fact, it is named after the Monte Carlo casino in Monaco for precisely this reason. The method is often used to evaluate integrals, but here it is used to explore the properties of the model. If the model had two parameters, we could pick a range of 20 values for each, and that would give 400 combinations. But it has fourteen, which would mean more than a billion billion combinations. This is the problem the Monte Carlo approach solves. The input parameters for the model are picked randomly from given ranges and the resulting cost is found. With a big enough sample size, the characteristics of the system can be understood whilst running the model far fewer times.
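The sketch below shows the counting argument and the sampling idea side by side. The `toy_cost` function is a hypothetical stand-in, not the paper's cost model, and the parameter ranges and sample size are arbitrary assumptions.

```python
import random

N_PARAMS = 14
VALUES_PER_PARAM = 20

# A full grid needs one model run per combination of parameter values.
grid_points = VALUES_PER_PARAM ** N_PARAMS
print(f"full grid: {grid_points:.2e} model runs")


def toy_cost(params):
    """Hypothetical stand-in for the cost model (NOT the model from the paper)."""
    return sum((p - 0.5) ** 2 for p in params)


def monte_carlo(n_samples, ranges, seed=0):
    """Draw each parameter uniformly from its range and evaluate the model."""
    rng = random.Random(seed)
    results = []
    for _ in range(n_samples):
        params = [rng.uniform(lo, hi) for lo, hi in ranges]
        results.append((params, toy_cost(params)))
    return results


# Assumed ranges: every parameter between 0 and 1.
ranges = [(0.0, 1.0)] * N_PARAMS
samples = monte_carlo(10_000, ranges)
print(f"Monte Carlo: {len(samples)} model runs")
```

Ten thousand random runs is a vanishingly small fraction of the full grid, yet each run varies all fourteen parameters at once.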

If you’re new to Monte Carlo analysis, this plot probably needs some explanation. The model has been run repeatedly, varying all of the parameters at the same time. Even though everything is changing from one point to the next, we can still plot the cost against any individual parameter, such as frequency. When we do this, we don’t get a nice simple line; we get a cloud of points. There are many points with the same frequency but different costs, which is the influence of the other parameters. What we are interested in is the lower edge of the cloud. This is the minimum cost at that frequency regardless of everything else. What the lower edge of the cloud shows is that there is an optimum around 0.2 Hz.
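One simple way to extract the lower edge of such a cloud is to bin the points by frequency and keep the minimum cost in each bin. The sketch below does this for a handful of made-up points. The bin edges and costs are hypothetical, and this is not necessarily how the plot in the paper was analysed.

```python
import bisect


def lower_edge(points, bin_edges):
    """Minimum cost in each frequency bin; None where a bin is empty.

    points is an iterable of (frequency, cost) pairs and bin_edges is a
    sorted list defining half-open bins [edge[i], edge[i + 1]).
    """
    minima = [None] * (len(bin_edges) - 1)
    for freq, cost in points:
        i = bisect.bisect_right(bin_edges, freq) - 1
        if 0 <= i < len(minima):
            if minima[i] is None or cost < minima[i]:
                minima[i] = cost
    return minima


# Made-up (frequency in Hz, cost in $/MWh) points, for illustration only.
points = [(0.15, 60.0), (0.15, 45.0), (0.6, 70.0), (0.7, 52.0), (3.0, 90.0), (4.0, 66.0)]
bin_edges = [0.1, 0.3, 1.0, 10.0]

print(lower_edge(points, bin_edges))  # [45.0, 52.0, 66.0]
```

The bin with the smallest minimum marks the approximate optimum frequency.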

Previous studies have, more often than not, discussed much higher frequencies, around 5 Hz. This seems surprising given the optimum we have just found. To see what is going on we need another piece of information, which is the energy per shot. What I’ve done is colour the points on the plot, putting them into three buckets: low, medium and high energy.

What this shows is that the optimum depends on the energy per shot. For low energy, the yellow points, the optimum is around 3 Hz. This energy per shot matches that assumed in previous studies. This is important because it gives us confidence in our model; the previous authors were at the optimum, but within their design space. The crucial thing that changes the conclusion is a larger energy per shot, something the First Light plant design can handle.
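The bucketing step can be sketched as follows: assign each sample to an energy bucket, then find the cheapest sample within each bucket. The thresholds and the sample values are made up for illustration and are not taken from the paper.

```python
def bucket_of(energy_gj, thresholds=(1.0, 3.0)):
    """Label a sample low, medium or high energy (thresholds are hypothetical)."""
    if energy_gj < thresholds[0]:
        return "low"
    if energy_gj < thresholds[1]:
        return "medium"
    return "high"


def optimum_by_bucket(samples):
    """Return {bucket: (frequency_hz, cost)} for the cheapest sample in each bucket.

    samples is an iterable of (frequency_hz, energy_gj, cost) tuples.
    """
    best = {}
    for freq, energy, cost in samples:
        bucket = bucket_of(energy)
        if bucket not in best or cost < best[bucket][1]:
            best[bucket] = (freq, cost)
    return best


# Made-up samples: (frequency in Hz, energy per shot in GJ, cost in $/MWh).
samples = [
    (3.0, 0.5, 70.0),
    (0.5, 0.5, 95.0),
    (1.0, 2.0, 60.0),
    (4.0, 2.0, 85.0),
    (0.2, 5.0, 45.0),
    (2.0, 5.0, 80.0),
]

for bucket, (freq, cost) in optimum_by_bucket(samples).items():
    print(f"{bucket}: cheapest at {freq} Hz, ${cost:.0f} / MWh")
```

Each bucket can have its own optimum frequency, which is why a single cloud hides the pattern until the points are coloured by energy.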

Digging in further also revealed something else very interesting. For low energy per shot, the plants near the optimum would be very big. I had a limit at 2 GW electrical (GWe) and these plants are right up against that limit. Higher energy can, counter-intuitively, lead to a smaller plant overall.

These findings, taken together, are huge. Not only is it simply cheaper, but everything about this new design point is also easier. The engineering risk is reduced on two major counts. First, the slower the better. Many of the engineering challenges specific to inertial fusion, including the driver lifetime, lessen as the frequency falls.

Second, the smaller the better. At a plant size of 150 MWe, the balance of plant is very low risk. Instead of being something bespoke, it is more likely to be something off the shelf, a design borrowed, which reduces risk hugely.

The smaller plant size also translates to a much lower capital cost, which makes financing a plant much easier. The upfront price tag is the other cost that matters, besides the levelised cost. Again, this couples back to the engineering risk because you can afford to iterate.

A large driver and a high energy per shot also reduce the physics risk. I don’t have the space to substantiate that here, but it really does.

This is what excites me about the paper. The model points the way to something that could be very cost competitive, but this is about the destination. Everyone in fusion gets told the “20 years away and always will be” joke. The criticism that sits behind this line is not that fusion power isn’t worth it, but that it’s too difficult. What this paper has found is a road to the destination that is a lot easier to traverse. That is what is important here.

And the key thing, in the end, that makes all this work is a cheaper driver technology, which is exactly what First Light is trying to prove.
